MUSTANG Data Quality Metrics -- Challenges of Covering the Archive

The IRIS Data Management Center (DMC) has been expanding its data quality efforts to provide analysis data for the entire data archive. The MUSTANG system was developed to take on this challenge and has been steadily gathering quality-control statistics for the past couple of years.

In that time, we have accelerated MUSTANG's processing through software improvements, system tuning, and increased hardware resources. What used to take weeks to complete now takes days. As a result, we are quite far along in covering all of the broadband channels for available networks, and we are now processing all networks daily. MUSTANG produces more than 40 statistics, some of which are spectrograms for noise analysis, and the MUSTANG database now exceeds 5 terabytes.

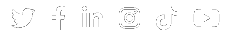

Two separate histograms illustrate how we have been able to accelerate our network coverage. The first histogram, which counts networks by percent coverage, was taken at the end of September.

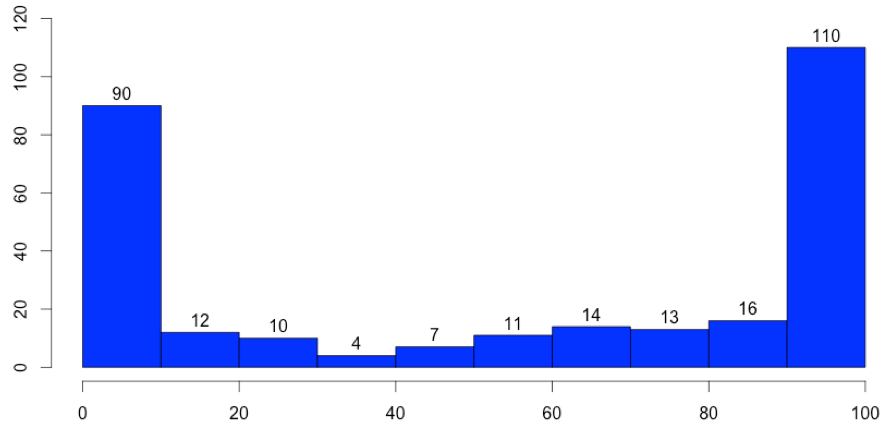

By mid-November, we had completed our sweep of international networks and worked through a large chunk of restricted experiment data (PASSCAL), raising our network count and shifting the distribution considerably toward the 90 to 100% category. The database grew by more than a terabyte in this short span of time.

We approach the gathering of metrics for MUSTANG in one of two ways:

- Distributed batch processing of an entire network-day, with a backlog queue that covers many days and weeks in a row.

- Scans that compare the MUSTANG catalog to the archive; the results are recorded and later selectively polled for recomputation.

The first method is how we process metrics in near real time. A scheduler runs a workflow over selected networks (filtering for broadband channels) and distributes the jobs to multiple compute nodes. We have been routinely running about 140 jobs simultaneously, each able to act independently to fetch data from FDSN Web Services and send its results to MUSTANG for storage. We currently allow a lag of 1 to 2 days behind real time before we begin processing new data.
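As a rough illustration of the network-day batching described above, the following Python sketch enumerates (network, day) jobs with a configurable lag and builds a data request for each. It is not the MUSTANG scheduler: the function names, the `BH?` channel filter, and the service base URL are assumptions made for the example, and the query parameters follow the general FDSN dataselect convention.

```python
from datetime import date, timedelta
from urllib.parse import urlencode

# Assumed base URL for an FDSN dataselect service (not taken from the article).
DATASELECT_URL = "https://service.iris.edu/fdsnws/dataselect/1/query"


def network_day_jobs(networks, today, lag_days=2, n_days=1):
    """Return (network, day) pairs for the n_days ending lag_days before today.

    n_days=1 corresponds to routine daily processing; a larger value
    queues a run of consecutive days, as for a backlog.
    """
    last_day = today - timedelta(days=lag_days)
    days = sorted(last_day - timedelta(days=i) for i in range(n_days))
    return [(net, day) for day in days for net in networks]


def dataselect_query(network, day, channel="BH?"):
    """Build a dataselect URL for one network-day.

    The default channel pattern "BH?" is a hypothetical stand-in for
    the broadband channel filter.
    """
    params = {
        "net": network,
        "cha": channel,
        "start": day.isoformat() + "T00:00:00",
        "end": (day + timedelta(days=1)).isoformat() + "T00:00:00",
    }
    return DATASELECT_URL + "?" + urlencode(params)


if __name__ == "__main__":
    for net, day in network_day_jobs(["IU", "II"], date.today()):
        print(net, day, dataselect_query(net, day))
```

Because each job carries everything it needs (network, day, request URL), jobs like these could be handed to independent workers in any order.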

The scheduler can also be set to run over an arbitrary span of days at a time, which is how we queue processing of the archive backlog. MUSTANG has been quite busy just cataloging the 300 TB of data we have at the DMC, going back more than 40 years. This means that we are continually looking for the next chunk of the archive to process.

The second method, recomputation, is a continual sustainment effort to keep the metrics in MUSTANG up to date with changes that occur in the archive. This is not as simple as it first sounds because:

- The MUSTANG database is already huge and takes time to audit, and

- A lot can change in the archive in a short amount of time.

Currently, the recomputation system regularly polls an up-to-date catalog that compares MUSTANG metrics to the state of data arriving in the archive. A job run at regular intervals queries the catalog for missing metrics and stale metrics, either of which flags that target for a rerun of the workflow. We have been expanding the reach of this recomputation scan from the initial IRIS networks to all networks.
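The missing-versus-stale distinction can be sketched in a few lines of Python. This is only an illustration under simple assumptions: each catalog is modeled as a dictionary keyed by a hypothetical (network, station, day) target, the archive side stores the time the data were last updated, and the metrics side stores the time the metrics were computed. The real catalog structure is not described in the article.

```python
from datetime import datetime


def find_recompute_targets(archive_catalog, metrics_catalog):
    """Compare two catalogs and return (missing, stale) target lists.

    archive_catalog: target -> time the archived data were last updated
    metrics_catalog: target -> time the metrics were last computed
    A target is "missing" if the archive has data but no metrics exist,
    and "stale" if the data changed after the metrics were computed.
    """
    missing = sorted(t for t in archive_catalog if t not in metrics_catalog)
    stale = sorted(
        t
        for t, updated in archive_catalog.items()
        if t in metrics_catalog and metrics_catalog[t] < updated
    )
    return missing, stale


if __name__ == "__main__":
    # Hypothetical sample entries, for illustration only.
    archive = {
        ("IU", "ANMO", "2014-11-01"): datetime(2014, 11, 2, 6, 0),
        ("IU", "ANMO", "2014-11-02"): datetime(2014, 11, 9, 12, 0),
        ("IU", "ANMO", "2014-11-03"): datetime(2014, 11, 4, 6, 0),
    }
    metrics = {
        ("IU", "ANMO", "2014-11-01"): datetime(2014, 11, 3, 0, 0),
        ("IU", "ANMO", "2014-11-02"): datetime(2014, 11, 4, 0, 0),
    }
    missing, stale = find_recompute_targets(archive, metrics)
    print("missing:", missing)
    print("stale:", stale)
```

Both lists would feed the same action, a rerun of the workflow on each listed target.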

We currently scan data that are one to two weeks old, about five days after MUSTANG first looks at them. This helps catch late additions to the real-time feeds and ensures that the data being read in are complete. A second pass is made semi-monthly, scanning the prior month of data for late arrivals and corrections. We know it is not enough to stop there, though.
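The two scan passes amount to simple date arithmetic, sketched below in Python. The exact boundaries are assumptions: the first pass is taken to cover data between 14 and 7 days old, and "the prior month" is read as the previous calendar month. The function names are hypothetical.

```python
from datetime import date, timedelta


def first_pass_window(today):
    """Return (start, end) dates for data that are one to two weeks old."""
    return today - timedelta(days=14), today - timedelta(days=7)


def prior_month_window(today):
    """Return (first day, last day) of the calendar month before today."""
    first_of_this_month = today.replace(day=1)
    last_of_prior = first_of_this_month - timedelta(days=1)
    return last_of_prior.replace(day=1), last_of_prior


if __name__ == "__main__":
    today = date.today()
    print("first pass:", first_pass_window(today))
    print("second pass:", prior_month_window(today))
```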

Because we are processing all prior years and may find ourselves updating or adding data for any point in time, we are developing a process that continually scans all years and acts on gaps in the metrics. In addition, we will improve our sensitivity to other triggers that require some or all metrics to be recomputed: changes in instrument metadata, changes in metrics algorithms, and anomalous metrics entries. These capabilities are all being rolled out over the next few weeks and months.

MUSTANG will stay very busy for some time to come. The ever-growing number of stations reporting in the present, the addition of numerous temporary deployments whose data arrive after a delay, and the constantly changing landscape of data and metadata will demand the continued use of resources and expertise at the IRIS DMC to make MUSTANG a reliable and complete analytics catalog for data quality assurance.

by Rob Casey (IRIS Data Management Center)