Migrating the IRIS DMC's services to the cloud

Common Cloud Platform project overview

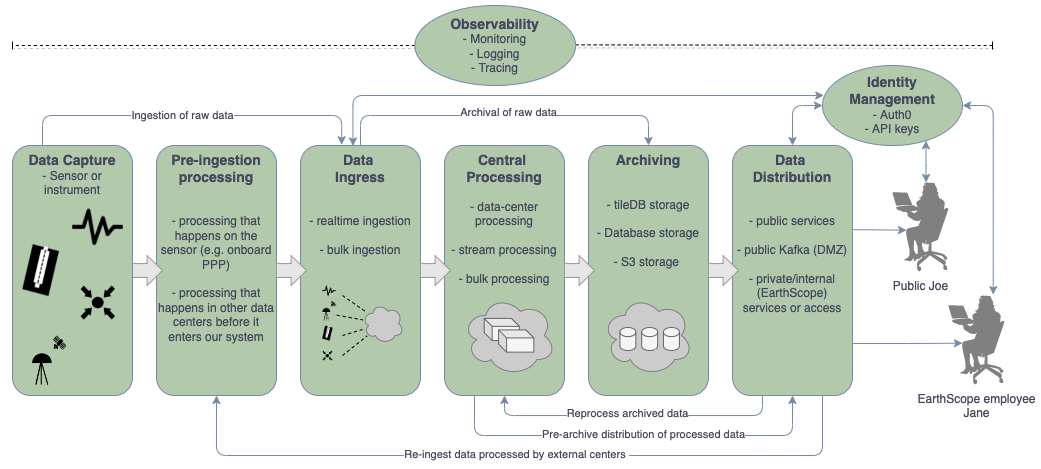

IRIS Data Services has been diligently working with UNAVCO’s Geodetic Data Services to design and build a unified platform for ingestion, archiving, curation, high-level derivative product generation, quality measurements, and distribution in a cloud environment for the operation of a combined data services facility. We refer to this new system as the Common Cloud Platform (CCP). The primary goals include providing higher-capacity services for data users, integrated multi-domain data discovery, and brand new capabilities not possible on the existing systems.

The guiding principles of the project are:

- Start from a “new system” perspective, informed by uses and needs of current facilities

- Support primary access mechanisms currently offered at existing facilities

- Agile design and development methodology

- Common data containers, software, and commercial off-the-shelf (COTS) solutions whenever possible

- Include capabilities to support FAIR data principles at a fundamental level

- Common identity management system and strategy

An important early lesson in cloud migration is that robust and efficient operation in such an environment requires adaptation of processes to cloud-native paradigms. In addition, we endeavor to use common systems across data types whenever possible for efficiency, scalability, and ease of operation. Together, these limit our options for adopting existing systems from the IRIS and UNAVCO data centers.

Current development focus

One large area of effort is the development of data flow systems, from capture, through processing, to archive repositories. With many hundreds of connections streaming data to the platform, a variety of transfer protocols and modes, and the development of new archiving pipelines, this is a formidable task. The design allows immediate access to all data that are captured by the platform, from real-time streams to deep archive requests.

Another area of focus is the development of a next-generation metadata system. Initially this system will support scientific metadata for seismological and geodetic data. The design allows extension to other metadata types, from Distributed Acoustic Sensing (DAS) to magnetotelluric to future metadata formats. Redesigning this system gives us the opportunity to make support for FAIR data principles, such as metadata versioning, a design goal.
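To make the idea of metadata versioning concrete, here is a minimal sketch in Python. It is not the CCP design: the class names, the `sample_rate_hz` field, and the `XX.DEMO` identifier are all hypothetical, chosen only to illustrate an append-only history in which earlier versions remain retrievable.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Any, Optional


@dataclass(frozen=True)
class MetadataVersion:
    """One immutable snapshot of a record's metadata (hypothetical model)."""
    version: int
    recorded_at: datetime
    fields: dict[str, Any]


@dataclass
class VersionedRecord:
    """Append-only metadata history for a single identifier."""
    identifier: str
    history: list[MetadataVersion] = field(default_factory=list)

    def update(self, changes: dict[str, Any],
               recorded_at: Optional[datetime] = None) -> MetadataVersion:
        # Merge the changes into the previous snapshot; never edit in place.
        previous = self.history[-1].fields if self.history else {}
        snapshot = MetadataVersion(
            version=len(self.history) + 1,
            recorded_at=recorded_at or datetime.now(timezone.utc),
            fields={**previous, **changes},
        )
        self.history.append(snapshot)
        return snapshot

    def latest(self) -> MetadataVersion:
        return self.history[-1]

    def as_of(self, when: datetime) -> Optional[MetadataVersion]:
        # Return the newest snapshot recorded at or before `when`.
        # Assumes updates are appended in chronological order.
        matches = [v for v in self.history if v.recorded_at <= when]
        return matches[-1] if matches else None
```

With a structure like this, a user could ask what the metadata said at the time an earlier analysis was run, which is the kind of reproducibility that versioning is meant to support.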

Common, cloud-optimized data containers

One of the most important areas of work is the design of modern, cloud-optimized data formats. Some of the formats that IRIS and UNAVCO currently use, such as the HDF5-based PH5 and text-based formats, are not well suited to analysis in the cloud. We have been developing alternative storage options for these data in TileDB Embedded, which provides a significant level of commonality across data types, even when they are not stored in the same schema, as well as a number of operational advantages over alternatives. The combination of TileDB and proper schema design will make our repositories analysis-ready and cloud-optimized.

Not all data will be stored in TileDB; most miniSEED-based data, for example, will remain in miniSEED.

Transitioning data users

We are sensitive to the fact that a transition of services from the IRIS DMC to the new EarthScope CCP has the potential to disrupt current data discovery and access patterns. By replicating the web service interfaces, migrating or replacing the most popular web applications, and redirecting connections from the old facility to the new one, we expect the transition to be quite easy for most users and seamless for many.
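As an illustration of why replicated interfaces keep the transition simple, the sketch below builds an FDSN dataselect request in Python. The `/fdsnws/dataselect/1/query` path and its parameters follow the FDSN web service convention used at `service.iris.edu`; the `CCP_BASE` host is a placeholder invented for this example, and the network, station, and channel codes are arbitrary sample values.

```python
from urllib.parse import urlencode

IRIS_BASE = "https://service.iris.edu"
CCP_BASE = "https://ccp.example.org"  # hypothetical host, for illustration only


def dataselect_url(base: str, net: str, sta: str, loc: str, cha: str,
                   start: str, end: str) -> str:
    """Build an FDSN dataselect query URL against the given base host."""
    query = urlencode({
        "net": net,
        "sta": sta,
        "loc": loc,
        "cha": cha,
        "starttime": start,
        "endtime": end,
    })
    return f"{base}/fdsnws/dataselect/1/query?{query}"


# The same request against the current and the (hypothetical) new host.
request = ("IU", "ANMO", "00", "BHZ",
           "2022-01-01T00:00:00", "2022-01-01T01:00:00")
old_url = dataselect_url(IRIS_BASE, *request)
new_url = dataselect_url(CCP_BASE, *request)
```

If the interface is replicated, only the base host differs between the two URLs, and even that difference is hidden from clients when connections to the old host are redirected.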

Furthermore, to ensure that data users are able to take full advantage of the new capabilities offered by the platform, we will be developing training materials and conducting workshops. These will include tutorials on how to process data in the cloud, which avoids transferring data over the internet and opens up the potential of applying nearly unlimited compute power.

Summary

We expect the new data platform to provide integrated, multi-domain data access from a single facility, while supporting a seamless transition for many users. Furthermore, we anticipate offering services and capacity not possible with the current systems. The option of supporting data processing without transferring the data over the internet will be a significant new capability. We anticipate having initial, demonstration-level services available in early 2023, opening the system for user testing in mid-2023 through 2024, and being fully operational in 2024.

by Chad Trabant (IRIS DMC)