Seismic Data Quality Control Workshop Results

In February 2006 and September 2007, the IRIS Data Management System (DMS) sponsored two roundtable workshops on seismic data quality control (QC). The workshops offered seismologists from institutions that scrutinize data associated with IRIS-related projects (e.g. USArray and the Global Seismic Network) the opportunity to exchange approaches, techniques and insights into current QC practices and challenges.

The 2006 workshop, which took place at the IRIS PASSCAL Instrument Center in Socorro, NM, focused on exchanging QC approaches used at the attending institutions, reviewing common classes of problems (sensor, datalogger, site, metadata, etc.), brainstorming about unresolved observations, and identifying action items for the future. The group resolved to

- continue collaboration and idea exchange online with a list-serv and web-based forum,

- accumulate and publish community QC wisdom to the web-based forum,

- collect or create software tools for QC tasks, such as

  - analyzing calibration data,

  - summarizing SEED metadata differences, and

  - better communicating reported data problems,

- distribute an article to the seismological community describing the results and key observations of the workshop, and

- convene a follow-up workshop the next year.

The 2007 follow-up workshop, held at U.C. San Diego’s Institute of Geophysics and Planetary Physics (IGPP) in La Jolla, CA, featured updates on QC approaches and software tools from attending institutions, a discussion of terminology to clarify problem reporting, and technique presentations on calibration and nearest neighbor analysis. It concluded with a half-day training session on identifying causes of QC issues seen in waveform data and the triage of problems that can be fixed in the field.

This article presents the products, key observations and ongoing issues identified during these two workshops.

Quality Control at Participating Institutions

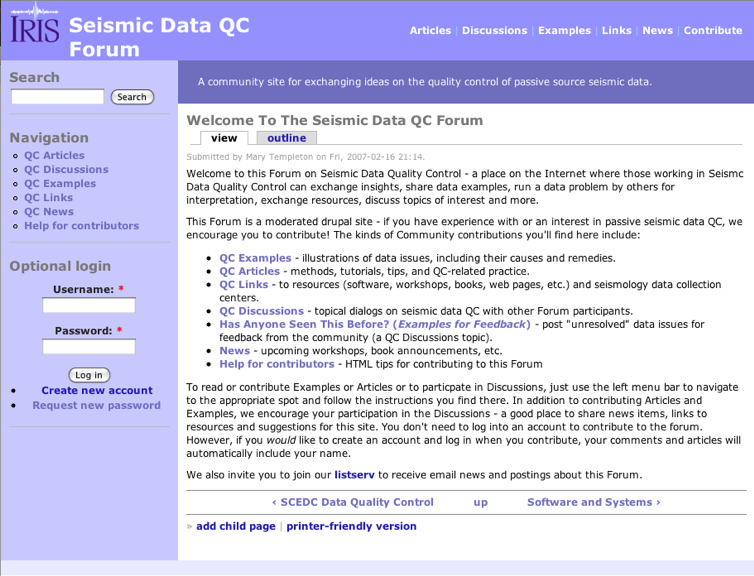

One of the results of the workshops was an exchange of information regarding the quality control measures employed at the represented institutions. The groups that gave presentations at the workshops include the Array Network Facility (ANF), the Advanced National Seismic System (ANSS), the Albuquerque Seismological Laboratory (ASL), the International Deployment of Accelerometers Data Collection Center (IDA DCC), the IRIS Data Management Center (DMC), the PASSCAL Instrument Center (PIC) and Array Operations Facility (AOF), the Plate Boundary Observatory (PBO), the Northern California Earthquake Data Center (NCEDC), and the Caltech Seismological Laboratory (Caltech). The slides for the ANF, PIC, NCEDC, IDA DCC, and Caltech presentations are available at http://ds.iris.edu/QCForum in the QC Articles section. The QCForum is a moderated online forum that is discussed in further detail in the following section. The institutional presentations covered various aspects of seismic QC including data availability and telemetry issues, identification of instrument malfunctions, vault instabilities and local noise sources, and metadata inaccuracies.

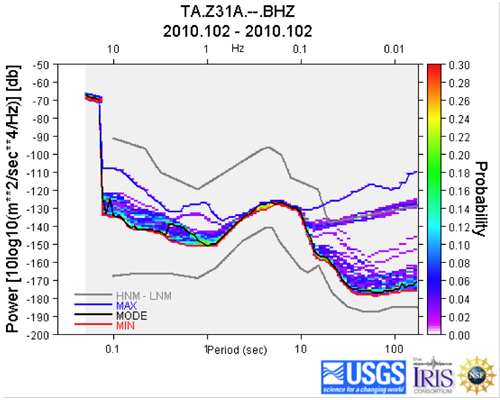

The most basic QC analysis technique is visual waveform inspection in the time domain, which is common at all institutions. Analysts and/or field engineers review waveforms on time scales from hours to weeks while investigating changes in background noise, the occurrence of various noise events, and the recording of earthquakes. The goal is to identify signs of sensor failure, local noise sources, and vault degradation including slab tilt and flooding, and to assess data availability. Frequency domain analysis in the form of raw spectra, power spectral densities (PSDs), and probability density functions (PDFs) can lead to the identification and quantification of local noise sources or sensor malfunction. For example, a sudden or gradual increase in the long-period noise level evident in the PDFs can indicate sensor failure or vault degradation. The sudden long-period energy increase in the accompanying PSD alerted network operators to a known sensor problem, which they corrected by locking and unlocking the sensor remotely.

PSDs and PDFs are routinely calculated for all data arriving at the IRIS DMC in real-time by the Quality Analysis Control Kit (QUACK).
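As a rough illustration of the long-period noise check described above, the following sketch compares the median PSD power in a long-period band between a baseline estimate and a recent one. It is not QUACK's algorithm: the function name, the 30 to 300 s band, the 10 dB threshold and the sample values are all assumptions chosen for illustration.

```python
from statistics import median


def long_period_increase(baseline, recent, band=(30.0, 300.0), threshold_db=10.0):
    """Compare median PSD power inside a period band.

    baseline, recent: dicts mapping period (s) -> PSD power (dB).
    band and threshold_db are illustrative assumptions, not community standards.
    Returns (flagged, delta_db).
    """
    low, high = band

    def band_median(psd):
        values = [power for period, power in psd.items() if low <= period <= high]
        if not values:
            raise ValueError("no PSD samples inside the requested period band")
        return median(values)

    delta = band_median(recent) - band_median(baseline)
    return delta >= threshold_db, delta


# Made-up PSD values (dB) at a few periods, before and after a sensor problem.
baseline = {1.0: -150.0, 30.0: -170.0, 100.0: -175.0, 300.0: -165.0}
recent = {1.0: -149.0, 30.0: -150.0, 100.0: -152.0, 300.0: -148.0}

flagged, delta_db = long_period_increase(baseline, recent)
print(flagged, delta_db)
```

Using the median rather than the mean keeps a single noisy period bin from triggering the flag; in practice the band and threshold would need tuning per sensor type and site.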

Many errors in metadata may not be easily discovered when visually scanning waveforms, so additional techniques and tools for identifying them have been created and implemented. Metadata errors may include sensitivity/gain misrepresentation, incorrect orientation of the three sensor components, polarity reversal, incorrect characterization of the instrument via its poles and zeros, incorrect sensor or datalogger type, incorrect dates of operation, etc. Some common techniques include the use of calibrations, tidal and event synthetics, particle motion analysis, and PDFs. A new tool for metadata QC that was developed and put into use at the IRIS DMC as a result of the workshops is the Perl script “dlessdiff”. It calls rdseed to parse an old and a new dataless SEED volume and reports the differences between them. dlessdiff is described in more detail on the QCForum under Resources, where it is also available for download.
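The core idea, comparing text dumps of an old and a new metadata volume, can be sketched in a few lines. This is not the dlessdiff script itself: it assumes the two volumes have already been dumped to text (for example by rdseed), it uses Python's standard `difflib` module, and the function name and sample lines are hypothetical rather than real rdseed output.

```python
import difflib


def metadata_diff(old_text, new_text, old_label="old", new_label="new"):
    """Return the added and removed lines between two metadata text dumps."""
    diff = list(
        difflib.unified_diff(
            old_text.splitlines(),
            new_text.splitlines(),
            fromfile=old_label,
            tofile=new_label,
            lineterm="",
            n=0,  # no context lines: report only what changed
        )
    )
    # The first two lines are the '---'/'+++' file headers; '@@' hunk markers
    # are dropped because they start with neither '+' nor '-'.
    return [line for line in diff[2:] if line.startswith(("+", "-"))]


# Hypothetical text dumps that differ only in the sensitivity value.
old_dump = "Station: ABC\nSensitivity: 6.0e8\nAzimuth: 0.0\n"
new_dump = "Station: ABC\nSensitivity: 6.3e8\nAzimuth: 0.0\n"

for line in metadata_diff(old_dump, new_dump):
    print(line)
```

A line-based diff like this only reports that text changed; deciding whether a change is intended still requires an analyst's judgment.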

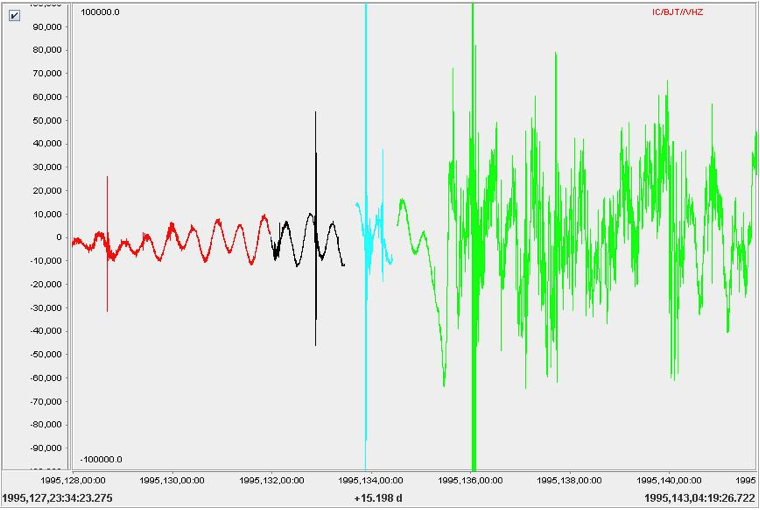

Other QC techniques discussed at the workshops include threshold monitoring of state-of-health channels, the use of phase picks for timing integrity, and data correction for clock drift or other repairable problems. Differences in quality control measures between institutions are often the result of mission-specific considerations and available resources. For example, the PIC relies heavily on pier testing prior to short-term deployments where real-time data availability may be limited. Permanent networks such as the GSN can use co-located sensors to separate vault/environmental issues from instrument performance problems. Specific sensor types also affect QC emphasis, e.g. borehole-specific issues for PBO or identification of vacuum loss for STS-1 instruments. The accompanying figure shows a drastic increase in long-period noise after day 1995,135 caused by vacuum loss within an STS-1 bell jar.
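Threshold monitoring of state-of-health channels, mentioned at the start of the paragraph above, amounts to checking each reading against acceptable limits. A minimal sketch follows; the channel names and limits are placeholders, not values recommended by any of the participating institutions.

```python
def check_state_of_health(readings, limits):
    """Return (channel, value, reason) tuples for readings outside their limits.

    readings: dict of channel name -> latest value.
    limits: dict of channel name -> (minimum, maximum); None disables a bound.
    """
    alerts = []
    for channel, value in sorted(readings.items()):
        if channel not in limits:
            continue  # no limits configured for this channel
        low, high = limits[channel]
        if low is not None and value < low:
            alerts.append((channel, value, "below minimum"))
        elif high is not None and value > high:
            alerts.append((channel, value, "above maximum"))
    return alerts


# Placeholder channels and limits, for illustration only.
limits = {
    "supply_voltage": (11.5, 15.0),
    "mass_position": (-5.0, 5.0),
    "clock_quality": (60, None),
}
readings = {"supply_voltage": 11.1, "mass_position": 1.2, "clock_quality": 95}

print(check_state_of_health(readings, limits))
```

Keeping the limits in a plain dictionary makes it easy to maintain different thresholds per station or sensor type.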

The QCForum Knowledge Base and Online Community

To facilitate continuing idea exchange and preservation, the group established a

- mailing list (qc-issues@iris.washington.edu) and

- moderated online forum

to showcase group-contributed examples, articles, links, news and discussions related to seismic data QC. Workshop participants and others interested in seismic data QC are encouraged to join (create an account) and contribute. Anyone can peruse the site’s contents.

Areas within the QCForum are described on its opening page and listed on the left menu bar for easy navigation. They include

QC Articles published online or contributed to the forum. These currently fall into the categories

- QC Practice by Institution

- Software and Systems for seismic data QC,

- Data Quality Studies, and

- QC Technique descriptions and tutorials.

QC Discussions give members an opportunity to post unresolved QC issues for feedback or participate in topical discussions. It includes an area for posting suggestions for the online forum.

QC Examples contributed by forum members can be browsed by

- Example title

- Issue type (e.g. sensor, power, site)

- Symptom (e.g. long-period noise, mean amplitude excursions), or

- Technique used for identification (e.g. waveform display, power spectral density)

These examples, several of which were presented at the workshops, serve as a knowledge base of QC issues that have been recognized and addressed within the community.

The QC News board lists upcoming events.

A collection of QC Links points to data centers engaged in seismic data QC and to online resources for analysts.

Communicating Quality Issues Internally and to the User Community

One topic of interest discussed at both workshops was how to better communicate data issues, both internally and to data users. For quality issues that can be fixed in the field, it’s necessary for analysts to convey a clear, timely description of the problem to the people who can fix it. For issues that might impact data use and interpretation, data users need to be alerted.

The most common mode of describing data issues internally is by email, particularly where multiple institutions collaborate. One way to clarify these emails is to use a set of terms that have the same meaning to everyone involved. The group worked to precisely define commonly used terms, such as spike, ping and tilt, and discussed the usefulness of approaching problem description in a way that doesn’t assume hardware knowledge on the part of the analyst. The resulting waveform description and terminology proposal is posted for comments as a QCForum discussion item, along with an example that applies the approach to a data problem.

Data Problem Reports (DPRs) are an established mechanism for analysts and network operators to document a problem with data archived at the IRIS DMC. DPRs briefly describe the nature, duration and resolution of a data quality problem. Data users can retrieve DPRs by searching for them by station name, network code or DPR id. DPRs are now being stored in the DMC’s Oracle database to make them easier to distribute in the future.

A second means of communicating data quality issues to data users is through the Station (blockette 51) and Channel (blockette 59) Comments encompassed in SEED metadata. Once added, these comments can be read from a SEED or dataless SEED volume with a SEED reader such as rdseed or extracted directly from the DMC’s Oracle database using SeismiQuery’s comment search.

For those familiar with the data header/quality indicator stored in miniSEED headers, it’s worth clarifying the meaning of the designation Q. Although Q stands for Quality Controlled Data, it does not necessarily attest that the data has been through a universally defined QC process, is problem-free, or has been corrected or modified. Similarly, a designation of R or D does not mean that the data has not been reviewed. Generally, R indicates that the data has not been modified. Q-quality data, when it co-exists with R- or D-quality data, is considered to be the best version of the waveforms available.
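As a concrete illustration, the quality indicator is a single ASCII character in the fixed section of each miniSEED 2.x record header, immediately after the six-character sequence number. The sketch below reads that byte and applies the preference described above (Q over R or D when they co-exist). The function names and the truncated sample headers are hypothetical, and the sketch deliberately does not rank R against D, since no such ranking is stated here.

```python
def quality_indicator(record):
    """Return the data header/quality indicator of a miniSEED 2.x record (bytes)."""
    if len(record) < 7:
        raise ValueError("record too short to contain a fixed header")
    # Byte offset 6 follows the six-character ASCII sequence number.
    return chr(record[6])


def best_available(records):
    """Prefer Q-quality records when they co-exist with others; otherwise keep all."""
    q_records = [r for r in records if quality_indicator(r) == "Q"]
    return q_records if q_records else list(records)


# Truncated, made-up headers: sequence number, indicator, reserved byte, station code.
raw = b"000001R ABC  "
reviewed = b"000001Q ABC  "

print([quality_indicator(r) for r in best_available([raw, reviewed])])
```

A real reader would parse the full 48-byte fixed header; only the indicator byte matters for this selection.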

Future Challenges

As the workshops came to a close, participants agreed that there are still some challenges on the horizon for streamlining and making optimal use of QC staff and findings. They deemed that these challenges would merit further discussion.

One area of continued interest regards how to better utilize QC information. To best benefit network personnel and data users alike, the process must include

- a way for analysts to know they have successfully communicated a problem to the person(s) – field engineers, repair technicians or data management staff – who can mitigate it,

- a way to communicate the discovered cause and resolution of a problem by the person(s) who resolved it back to the QC analyst,

- a mechanism to preserve problem descriptions, causes and resolutions for future internal reference,

- a way to distill the QC information pertinent to data users into a useful form, and

- a way to educate data users about where to find QC information and how to make use of it.

Interest remains in better understanding the pitfalls and QC techniques related to metadata. There is still discussion about how best to utilize sensor calibration measurements and what software is available to do this. Some institutions compare measurements from coincident sensors (see, for example, IDA DCC’s comparison of M2 tidal signals and the Lamont-Doherty Waveform Quality Center’s inter-sensor coherence analysis). This approach could be leveraged more frequently to verify instrument response and hardware health where stations have multiple sensors.
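A much simpler time-domain stand-in for such comparisons is the zero-lag correlation and amplitude ratio between two co-located, time-aligned traces. The sketch below is not the IDA DCC or Lamont-Doherty method; the function name and the synthetic data are assumptions. A correlation near -1 would hint at a polarity reversal, and an amplitude ratio far from 1 at a gain or sensitivity discrepancy, provided both traces have already been converted to the same units.

```python
import math


def compare_colocated(a, b):
    """Return (correlation, rms_ratio) for two equal-length, time-aligned traces.

    rms_ratio is the demeaned RMS amplitude of b divided by that of a.
    """
    if len(a) != len(b) or len(a) < 2:
        raise ValueError("traces must be time-aligned and equal in length")
    mean_a = sum(a) / len(a)
    mean_b = sum(b) / len(b)
    da = [x - mean_a for x in a]
    db = [y - mean_b for y in b]
    energy_a = sum(x * x for x in da)
    energy_b = sum(y * y for y in db)
    if energy_a == 0 or energy_b == 0:
        raise ValueError("a trace is constant; correlation is undefined")
    correlation = sum(x * y for x, y in zip(da, db)) / math.sqrt(energy_a * energy_b)
    rms_ratio = math.sqrt(energy_b / energy_a)
    return correlation, rms_ratio


# Synthetic example: the second trace is flipped and twice as large.
reference = [math.sin(2 * math.pi * i / 50) for i in range(200)]
suspect = [-2.0 * x for x in reference]

corr, ratio = compare_colocated(reference, suspect)
print(round(corr, 3), round(ratio, 3))
```

Unlike a coherence analysis, this single number does not separate behavior by frequency, so it is best treated as a quick screening check.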

Another ongoing challenge concerns linking electrical effects at a station with their waveform responses. Grounding problems, corrosion and deteriorating connections typically find expression in seismic data, but recognizing cause and effect can be difficult.

Finally, it is becoming more common to record sensors that measure atmospheric properties such as pressure, temperature and humidity at seismic stations. Analysts looking at these stations are pondering how data from these sensors reflect station health and how to ensure quality in their waveforms and metadata as well.

Workshop Participants

| Institution | Personnel |
|---|---|
| Caltech Seismological Laboratory, Pasadena, CA | Hauksson, E. and Wu, B. |
| EarthScope USArray Array Network Facility, La Jolla, CA | Astiz, L., Cox, T. and Vernon, F.L. |
| IRIS Data Management Center, Seattle, WA | Benson, R. and Ahern, T. |
| IRIS Global Seismic Network (GSN) | Anderson, K. |
| IRIS GSN/International Deployment of Accelerometers, La Jolla, CA | Davis, P. and Klimczak, E. |
| IRIS PASSCAL, USArray Array Operations Facility, Socorro, NM | Arias, E., Barstow, N., Beaudoin, B., Busby, R., Fowler, J., Pfeifer, C., and Sauter, A. |
| Northern California Earthquake Data Center, Berkeley, CA | Uhrhammer, R. |
| UNAVCO, Plate Boundary Observatory, Boulder, CO | Hodgkinson, K. |
| U.S.G.S., GSN, Advanced National Seismic System (ANSS), Golden, CO | Bolton, H. |
| U.S.G.S., GSN, ANSS, Albuquerque, NM | Gee, L., Sandoval, L., Storm, T. and Peyton Thomas**, V. |

(** – now at Caltech)

by Mary Templeton (IRIS Data Management Center), Gillian Sharer (IRIS Data Management Center), Peggy Johnson (IRIS Data Management Center) and Chad Trabant (IRIS DMC)